Committer's note: This was originally written by Paul Herman in January 2004. He has graciously allowed it to be posted on the DragonFly site.Introduction

time sleep 1never returns exactly 1 second? You might know the answer, but if you think you do, you might also be wrong.

Accurate time keeping and event triggering is becoming more and more affordable as PC hardware becomes increasingly faster. Network communication is now a matter of microseconds and system call round trip times are accomplished within nanoseconds. This document examines the accuracy of the nanosleep()/tsleep() kernel functions under DragonFly BSD 1.0-CURRENT.

Problem

Suppose we wish to trigger an event at an exact time on the clock, i.e.

every second just as the second hand moves. In pseudo code, we wish to do

something like:

forever now = look_at_clock() s = next second - now sleep(s) trigger_event() end

So, if our clock reads 10:45:22 and the second hand reads 22.874358, then by the time our sleep function wakes up, it should be exactly 10:45:23. Similarly, the next call to trigger_event() will happen at exactly 10:45:24. So much for the theory, let's try this in the real world and see how close we can get.

Observations

I quickly coded up wakeup_latency.c which sleeps until the next

second and then prints the difference in seconds between the actual time and the

time expected. On a GHz machine, I expected this to be very accurate, but to my

astonishment, I got the following:

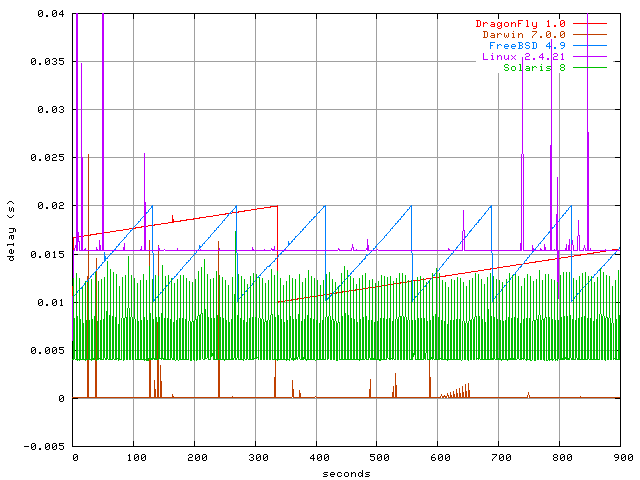

0.019496 0.019506 0.019516 0.019525 0.019535i.e. 19ms later than expected! This is is nearly twice the default system tick length of 10ms based on 100 Hz!! That is unexpected and certainly undesireable. I then ran the program on:

Well, there are quite a few things to notice here indeed.

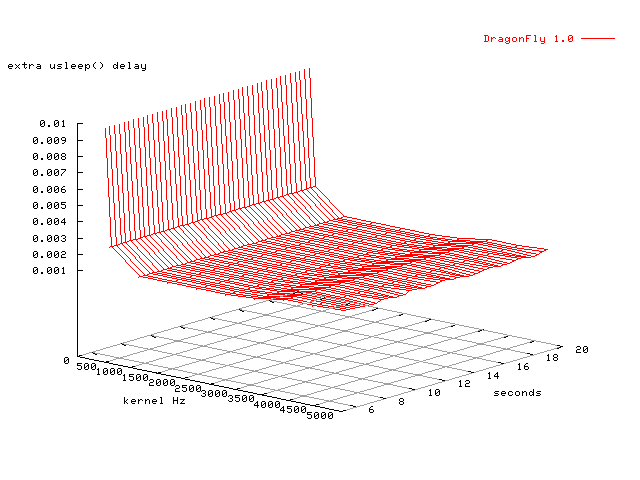

What happens when you keep the OS & hardware constant and change the Hz?

A good question with even more unexpected results.

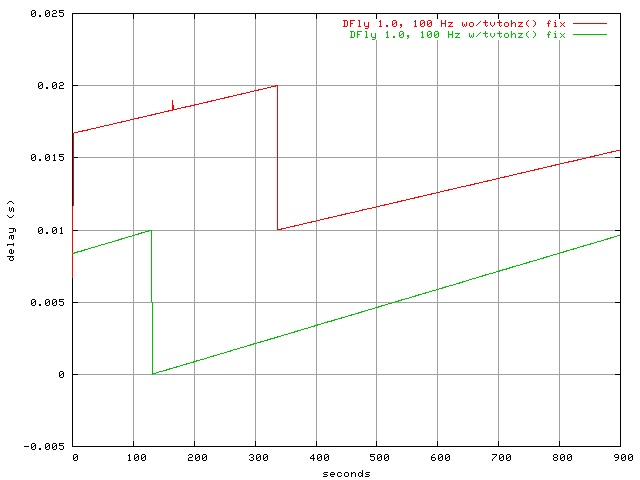

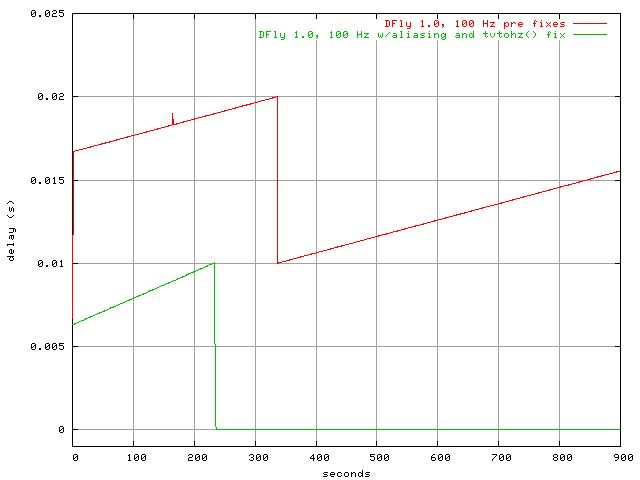

This following figure shows what happens on DFly 1.0.

Now that we've characterized the problem, let's see if we can solve it.

Solutions?

The first place I looked was kern/kern_time.c:nanosleep(). Although

it does truncate the precision of the request from nanoseconds to microseconds (and then from

microseconds to ticks via tvtohz()), that alone shouldn't account for the differences

we are seeing. However, a closer examination shows a bug in tvtohz()

(kern/kern_clock.c rev 1.12).

With hz=100 and ticks=10000, I get:

| seconds | theoretical ticks rint(seconds*Hz) | tvtohz() |

| 0.900000 | 90 | 91 |

| 0.990000 | 99 | 100 |

| 0.999000 | 100 | 101 |

| 1.000000 | 100 | 101 |

| 1.000001 | 100 | 101 |

| 1.000002 | 100 | 102 |

| 1.001000 | 100 | 102 |

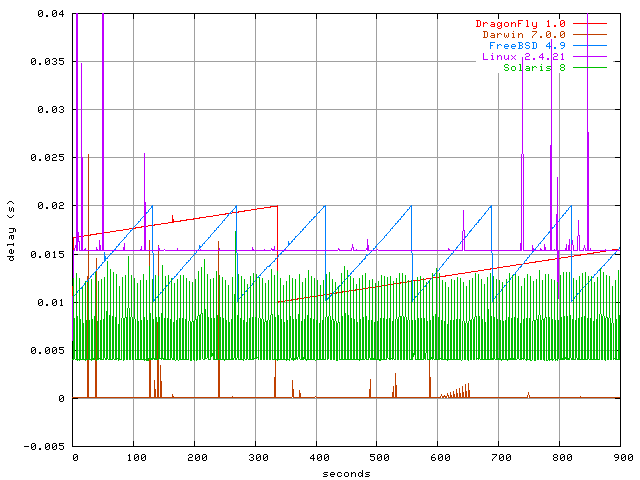

This change was apparently introduced into FreeBSD rev 1.11 in 1994. This should be corrected, and the bug that it was meant to fix (sleep(1) exiting too soon) should be properly fixed. I did with this patch, and now our usleep() delays look a bit better:

Clock Aliasing

So what about the sawtooth patterns? The kernel clock shouldn't behave that way,

and something is definitely rotten in Denmark.

A keen eye will see in the last figure that the change to tvtohz() produced a slightly steeper or quicker frequency of the sawtooth wave. What does this mean? Well, now that for any given timeval, tvtohz() returns fewer ticks, the system requires fewer ticks in order to cover the same time span, or to put it differently, fewer "microseconds" to cover the same wall clock time, we have effectively (at least from the view of the Hz timer) sped up the system clock. Interesting. It seems the Hz timer is out of sync with the system hardware clock producing aliasing. This is not unlike the aliasing you see in movies when automobile wheels appear to turn backwards. In that case, the camera shutter frequency is slightly out of sync with the turning of the wheels.

To confirm that our "wheels" our indeed out of sync, I ran the same program I've been using all along, and at the same time tinkered with the clock frequency using ntptime [ -f freq ] (like adjusting the speed of the car.) Sure enough, I was able to tame the observed delays any way I pleased. Other evidence of a software error is that the Hz timer is clocked off of the i8254 clock which is the same clock as the hardware timecounter, i.e. the i8254 based timecounter appears to run faster than the i8254 Hz timer! There must be a software bug.

Upon further investigation, Matt Dillon suggested that the drift could be caused by the cumulative effect of a system tick not being an integral multiple of an i8254 timer tick, which in effect speeds up the Hz timer to a speed that is faster than it should be. The solution was to periodically slow down the Hz timer by a small fraction so that on the average, the Hz timer would tick exactly as it should.

This initial change produced very promising results, which led to Matt to improve the design and develop a PLL loop in clkintr() which slowly adjusts the frequency of the Hz timer against the wall clock timer (be it i8254 or TSC) to get it as close to the target Hz as possible. This change can is refelcted in Revisions 1.8 - 1.10 in clock.c. A nice side effect of this change is that because the Hz timer is skewed against the wall clock, any clock disciplining done to the wall clock time (by ntpd, for example) will also adjust the Hz rate accordingly.

The improvement is reflected in the following figure using the same old wakeup_latency.c tests (with slight changes to improve precision.) Due to the nature of the PLL which takes a few minutes to stablize, one can see that there is clock aliasing until a lock on the frequency is attained. After that, the Hz timer appears very accurate, indeed.

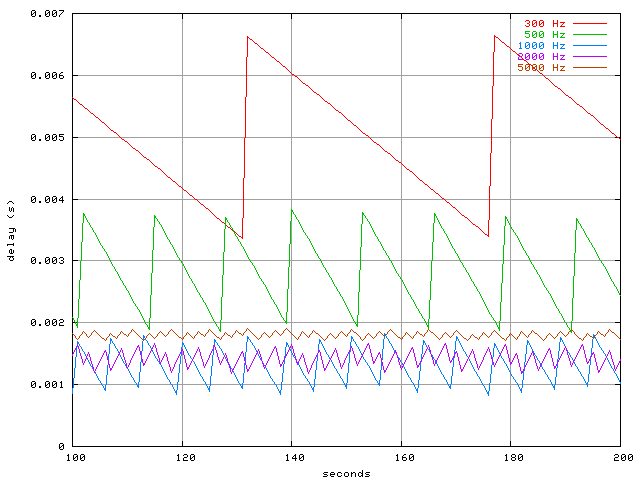

Here is a closer look, both with the PLL patch at different Hz rates (using the TSC as the wall clock timecounter.)

There is some slight improvement with higher Hz rates, but both seem to perform very well now, generally staying within 10 microseconds of the expected time (about a thousand times better than some other operating systems.) At this fine resolution, you can even see how other processes affect latency, such as the periodic syncer daemon. The difference is like looking through the hubble telescope rather than binoculars.

As with everything, answering some questions will potentially open up new ones. One can naturally ask "why are we limited to around 10 microseconds when the i8254 has about an 0.8 microsecond resolution?", but the changes already made represent a significant improvement in the standard Hz timer, and one can only be pleased with the results.

In conclusion, we see that after asking a rather academic question such as "why isn't sleep very accurate?" which may have little practical application, some minor deficiencies were uncovered that potentialy have very practical applications, such as accurate bandwidth shaping as measured against a well disciplined wall clock.

Appendix: Still Questions?

Finally, I'd like to stave off some possible questions you may have at this point.

Q: Why don't you renice process to a higher priority so the process will

wake up sooner?

A: I did, no difference.

Q: That's easy to explain, gettimeofday() is a system call, and system calls

have lots of overhead. That's why it takes so long.

A: Nope. I've found that clock_gettime() takes only a total of

about 500-800 nanoseconds when called from userland on a GHz machine, and

nanotime() takes only about 60 ns when called directly from inside the kernel.

Q: Just increase kern.hz and your delay will go away.

A: No they won't. Sure, usleep() will return faster, but there is still a definite delay problem.

See the pretty graphs above.

Q: Use the TSC timer instead of the i8254. It has a much higher resolution.

A: I tried both, no difference.